High-Load System Architecture: Handling a Million Requests per Second

A single server is a single point of failure. If your backend lives on one machine, you're not building a product, you're building a time bomb. A high-load system is not about powerful hardware. It's about the right architecture.

Three Pillars of High-Load

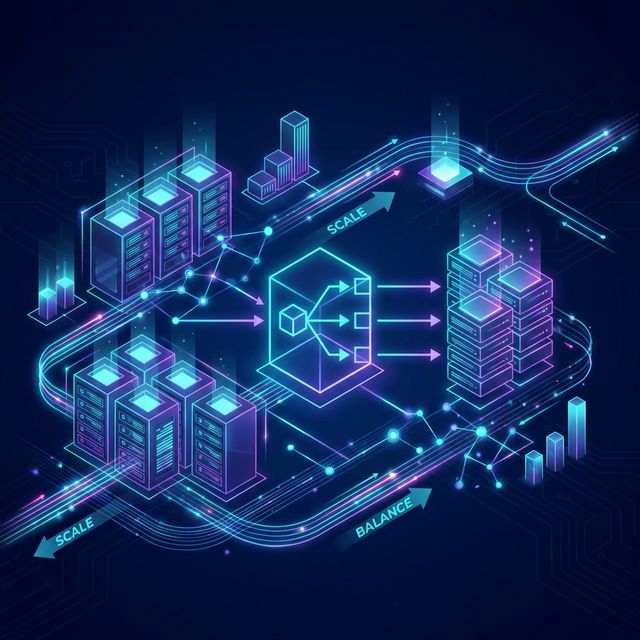

Fig 1. Load Balancer distributes traffic across a server pool

1. Horizontal Scaling (Scale Out)

Rule: Don't make one server more powerful; add more servers. This is the fundamental difference between vertical scaling (one $50,000 machine) and horizontal scaling (ten $500 machines).

- Pro: A far higher growth ceiling than any single machine allows. If one node fails, the rest keep working.

- Challenge: The application must be stateless. No storing sessions in process memory.
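One way to picture the stateless requirement is the sketch below. It assumes session data lives in a shared external store, so any node can serve any request. `SharedSessionStore` and `AppInstance` are hypothetical names for illustration; the in-memory dict only stands in for a real store such as Redis.

```python
import secrets


class SharedSessionStore:
    """Stand-in for an external store such as Redis (hypothetical helper)."""

    def __init__(self):
        self._data = {}

    def save(self, session_id, payload):
        self._data[session_id] = dict(payload)

    def load(self, session_id):
        return self._data.get(session_id)


class AppInstance:
    """One application node. It keeps no session data in its own memory."""

    def __init__(self, name, store):
        self.name = name
        self.store = store

    def login(self, user_id):
        # The session is written to the shared store, not to this process.
        session_id = secrets.token_hex(16)
        self.store.save(session_id, {"user_id": user_id})
        return session_id

    def whoami(self, session_id):
        session = self.store.load(session_id)
        return None if session is None else session["user_id"]


store = SharedSessionStore()
node_a = AppInstance("node-a", store)
node_b = AppInstance("node-b", store)

# The user logs in on node A, and the next request lands on node B.
session_id = node_a.login(user_id=42)
user_seen_by_b = node_b.whoami(session_id)
```

Because neither node holds the session itself, the balancer is free to send each request to whichever node is available.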

2. Load Balancer

A load balancer is a dispatcher. It accepts all incoming requests and distributes them to live nodes. Distribution algorithms: Round Robin, Least Connections. Nginx, HAProxy, AWS ALB — a choice for any budget.
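The two algorithms named above can be sketched in a few lines. This is a simplified illustration, not how Nginx or HAProxy implement them: the class names are hypothetical, and health checks, weights, and concurrency are left out.

```python
import itertools


class RoundRobinBalancer:
    """Hands out nodes in a fixed rotating order."""

    def __init__(self, nodes):
        self._cycle = itertools.cycle(nodes)

    def pick(self):
        return next(self._cycle)


class LeastConnectionsBalancer:
    """Picks the node with the fewest in-flight requests."""

    def __init__(self, nodes):
        self.active = {node: 0 for node in nodes}

    def pick(self):
        # On a tie, the node listed first wins.
        node = min(self.active, key=self.active.get)
        self.active[node] += 1
        return node

    def release(self, node):
        # Call this when the request on `node` has finished.
        self.active[node] -= 1


rr = RoundRobinBalancer(["app-1", "app-2", "app-3"])
rr_picks = [rr.pick() for _ in range(4)]

lc = LeastConnectionsBalancer(["app-1", "app-2"])
first = lc.pick()    # both idle, so the first node is chosen
second = lc.pick()   # app-1 is busy, so app-2 is chosen
lc.release(second)   # app-2 finishes its request
third = lc.pick()    # app-2 is now the least loaded
```

Round Robin works well when requests cost roughly the same. Least Connections adapts better when some requests run much longer than others.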

3. Caching — The First Line of Defense

Most requests in a typical service are repetitive (roughly 80%, as a rule of thumb). Redis or Memcached cache responses and reduce database load. The cache-aside rule: check the cache first; on a miss, go to the database and store the result in the cache.
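A minimal sketch of cache-aside follows. It assumes an in-process `TTLCache` standing in for Redis or Memcached and a `FakeDatabase` that counts queries. Both classes, the key format, and the 60-second TTL are illustrative assumptions.

```python
import time


class TTLCache:
    """Tiny in-memory cache with expiry, standing in for Redis/Memcached."""

    def __init__(self, ttl_seconds):
        self.ttl = ttl_seconds
        self._items = {}

    def get(self, key):
        entry = self._items.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if time.monotonic() >= expires_at:
            del self._items[key]  # expired entries count as a miss
            return None
        return value

    def set(self, key, value):
        self._items[key] = (value, time.monotonic() + self.ttl)


class FakeDatabase:
    """Hypothetical database that counts how often it is queried."""

    def __init__(self):
        self.calls = 0

    def load_user(self, user_id):
        self.calls += 1
        return {"id": user_id, "name": f"user-{user_id}"}


def get_user(cache, db, user_id):
    key = f"user:{user_id}"
    cached = cache.get(key)          # 1. check the cache first
    if cached is not None:
        return cached
    user = db.load_user(user_id)     # 2. on a miss, go to the database
    cache.set(key, user)             # 3. store the result for next time
    return user


cache = TTLCache(ttl_seconds=60)
db = FakeDatabase()
first = get_user(cache, db, 7)   # miss: hits the database
second = get_user(cache, db, 7)  # hit: served from the cache
```

The TTL is the simplest invalidation strategy. Data that must never be stale would also need an explicit delete or update of the key on write.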

Asynchrony as a Philosophy

Not all tasks need to be executed synchronously. Email sending, report generation, image resizing — all this is placed in a queue (Kafka, RabbitMQ, SQS) and processed by workers in the background. The user doesn't wait — they get an instant response.
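The pattern can be sketched with Python's standard `queue` and `threading` modules standing in for a real broker such as Kafka, RabbitMQ, or SQS. The handler name, the task shape, and the fake "send email" step are assumptions for illustration.

```python
import queue
import threading

tasks = queue.Queue()   # stand-in for a message broker
results = []            # what the worker has done so far
results_lock = threading.Lock()


def worker():
    """Background worker: takes tasks off the queue until told to stop."""
    while True:
        task = tasks.get()
        if task is None:          # sentinel value: shut down
            tasks.task_done()
            break
        with results_lock:
            results.append(f"sent email to {task['to']}")
        tasks.task_done()


def handle_signup(email):
    """Request handler: enqueue the slow work and respond immediately."""
    tasks.put({"to": email})
    return {"status": "accepted"}


worker_thread = threading.Thread(target=worker, daemon=True)
worker_thread.start()

response = handle_signup("user@example.com")  # returns without waiting

tasks.join()          # for the demo only: wait until the queue is drained
tasks.put(None)       # ask the worker to stop
worker_thread.join()
```

In production the queue would live outside the process, so tasks survive restarts and workers can be scaled independently of the web nodes.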

NineLab Tip: Start with a simple monolith, but design it so that services can be extracted easily. "Premature microservices" has killed more startups than high load.

Bottom line: A high-load system is not magic. It's a load balancer + a stateless app + Redis + queues + a well-designed database. Each layer relieves the next. Large services such as Telegram, Avito, and Ozon are built on the same principles.

FAQ for this topic

Test traffic shape and data rarely match production. You need realistic scenarios, the same metrics as in production, and a gradual ramp-up with rollback.

For a quick checklist, the first suspects are usually database query plans, connection pools, synchronous external calls, and queues.

Caching does not necessarily help: invalidation, cold starts, and key skew can hurt. A cache should be designed around read models and SLOs.

Sharding makes sense when vertical scaling and query tuning have hit a ceiling and data growth is predictable along a shard key.

