High-Load Architecture: How to Build Systems That Don't Crash

Your system works great for 100 users. But what happens if TechCrunch writes about you tomorrow and 100,000 show up? Many startups fail not because the idea was bad, but because the system could not scale when demand arrived. High-Load Architecture is the art of building systems that grow with the business.

Vertical vs Horizontal: The Eternal Battle

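There are two paths to growth. You can buy a "bigger server" (Scale Up, vertical scaling) or add "many small servers" (Scale Out, horizontal scaling). Scaling up is simpler, but it hits a hardware ceiling and leaves one machine as a single point of failure. Scaling out removes that ceiling, but it requires a load balancer and application servers that do not keep user state locally.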

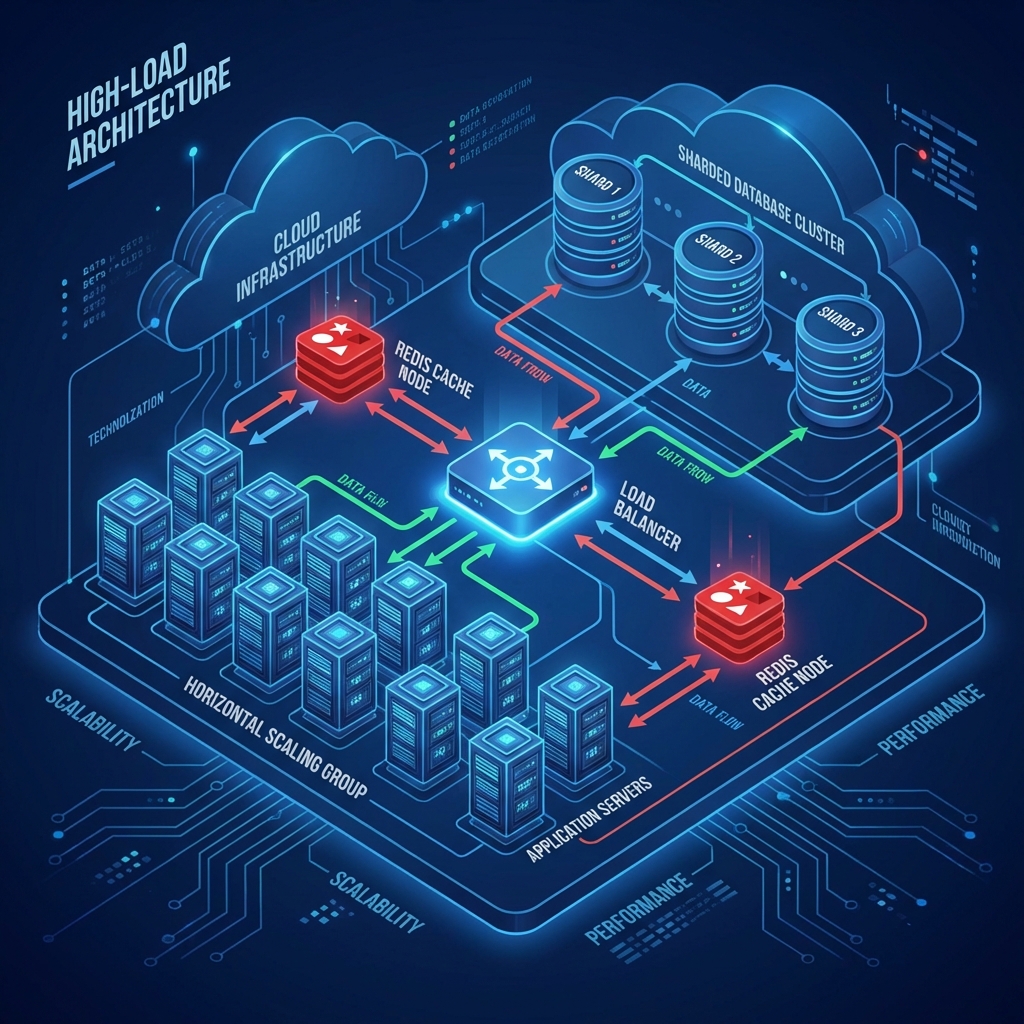

Fig 1. Typical Horizontal Scaling Scheme

Key Survival Patterns

- Load Balancing: Nginx or HAProxy at the entry point distributes requests across app servers. If one app server fails its health checks, the balancer stops sending traffic to it, and most users notice nothing.

- Database Replication: The primary (master) server accepts writes, and replica (slave) servers handle reads. This offloads the primary, since most web traffic is reads (roughly 80% by the usual rule of thumb).

- Sharding: When data grows beyond what one database server can hold or serve (petabytes, in the extreme case), we "cut" the database into pieces (shards): users A-M on server 1, N-Z on server 2.
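
The three patterns above can be sketched in a few lines of Python. This is a minimal illustration, not how Nginx, HAProxy, or any particular database implements them: the class and function names, the backend labels, and the "starts with SELECT" read check are all assumptions made for the example. The hash-based function is an alternative to the A-M / N-Z split, shown because alphabetical ranges tend to fill unevenly.

```python
import hashlib
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backends in turn, skipping any marked unhealthy (hypothetical helper)."""

    def __init__(self, backends):
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._ring = cycle(self.backends)

    def mark_down(self, backend):
        # A real balancer would do this after failed health checks.
        self.healthy.discard(backend)

    def mark_up(self, backend):
        if backend in self.backends:
            self.healthy.add(backend)

    def pick(self):
        # Look at each backend at most once per call.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backends")


class ReadWriteRouter:
    """Sends reads to replicas and everything else to the primary."""

    def __init__(self, primary, replicas):
        self.primary = primary
        self._replicas = cycle(replicas) if replicas else None

    def route(self, sql):
        # Simplification: treat any statement starting with SELECT as a read.
        is_read = sql.lstrip().upper().startswith("SELECT")
        if is_read and self._replicas is not None:
            return next(self._replicas)
        return self.primary


def shard_by_range(username):
    """The article's scheme: A-M on shard 1, everything else on shard 2."""
    first = username[:1].upper()
    return "shard-1" if "A" <= first <= "M" else "shard-2"


def shard_by_hash(key, shard_count):
    """Alternative: a stable hash spreads keys more evenly than letter ranges."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count
```

One caveat worth keeping in mind with the read/write split: replicas can lag behind the primary, so a read that must see the write made a moment ago is usually safer on the primary.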

Caching: Don't Load the DB in Vain

The fastest request is the one that didn't happen. Store hot data in RAM (Redis/Memcached).

Rule: if data is requested often but changes rarely (user profile, product catalog), it belongs in the cache.
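
A common way to apply this rule is the cache-aside pattern: check the cache first, fall back to the database on a miss, and drop the cached entry when the data changes. The sketch below uses a small in-memory class as a stand-in for Redis or Memcached. `TTLCache`, `get_profile`, `update_profile`, and the `load_from_db` / `save_to_db` callbacks are hypothetical names for illustration, and the expiry time is an assumption you would tune per data type.

```python
import time


class TTLCache:
    """In-memory stand-in for Redis/Memcached with per-entry expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock          # injectable so expiry can be tested
        self._store = {}            # key -> (expires_at, value)

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        expires_at, value = entry
        if self.clock() >= expires_at:
            # Entry is stale: drop it and report a miss.
            del self._store[key]
            return None
        return value

    def set(self, key, value):
        self._store[key] = (self.clock() + self.ttl, value)

    def delete(self, key):
        self._store.pop(key, None)


def get_profile(user_id, cache, load_from_db):
    """Cache-aside read: hit the database only on a cache miss."""
    key = f"profile:{user_id}"
    cached = cache.get(key)
    if cached is not None:
        return cached
    profile = load_from_db(user_id)
    cache.set(key, profile)
    return profile


def update_profile(user_id, new_profile, cache, save_to_db):
    """Write to the database, then invalidate so the next read reloads."""
    save_to_db(user_id, new_profile)
    cache.delete(f"profile:{user_id}")
```

The expiry acts as a safety net: even if an invalidation is missed, stale data disappears after `ttl_seconds`.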

Asynchrony and Queues

The user clicks "Generate Report". This is a heavy operation, so don't make them stare at a spinner. Send the task to a queue (RabbitMQ/Kafka), let a worker process it in the background, and tell the user right away: "We'll send a notification when it's ready."
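
The flow can be sketched with Python's standard library, using `queue.Queue` and a thread as a stand-in for RabbitMQ/Kafka and a separate worker process. Everything named here (`submit_report`, `build_report`, the `results` dictionary, the `month` parameter) is hypothetical; a real setup would use a broker client and persistent job storage.

```python
import queue
import threading
import uuid

tasks = queue.Queue()            # stand-in for the message broker
results = {}                     # job_id -> status; stand-in for a job table
results_lock = threading.Lock()


def build_report(params):
    """Placeholder for the heavy operation."""
    return f"report for {params['month']}"


def submit_report(params):
    """Called by the request handler: enqueue and return immediately."""
    job_id = str(uuid.uuid4())
    with results_lock:
        results[job_id] = {"state": "queued"}
    tasks.put((job_id, params))
    return job_id


def worker():
    """Background loop: take jobs off the queue until a None sentinel arrives."""
    while True:
        job = tasks.get()
        if job is None:
            tasks.task_done()
            break
        job_id, params = job
        try:
            status = {"state": "done", "result": build_report(params)}
        except Exception as exc:
            # A failed job must not kill the worker.
            status = {"state": "failed", "error": str(exc)}
        with results_lock:
            results[job_id] = status
        tasks.task_done()
```

To try it, start the worker with `threading.Thread(target=worker, daemon=True).start()`, call `submit_report({"month": "2024-01"})`, and look up the returned job ID in `results`. The request handler returns as soon as the job is queued, which is the point of the pattern.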

Conclusion: High-Load is not about expensive servers. It's about smart architecture where the failure of one component doesn't bring down the whole system (No Single Point of Failure).

FAQ for this topic

Traffic shape and data rarely match prod. You need scenarios, the same metrics as prod, and gradual ramp with rollback.

The first suspects on a quick checklist are usually database query plans, connection pools, synchronous external calls, and queues.

Not necessarily: invalidation, cold starts, and key skew can hurt. Cache is designed around read models and SLOs.

When vertical scaling and query tuning hit a ceiling and data growth is predictable along a shard key.
